My Neighbor Totoro starts with one summer day in the Japanese countryside. Tatsuo and his two daughters, Satsuki and Mei, arrive in a rattling truck along a winding road, pulling up to a dusty, creaky house that has been empty just long enough. They’ve moved to be closer to the girls’ mother, who is recovering in a nearby hospital. Over the course of the summer, Satsuki and Mei wander through fields and forests, drifting just beyond the tree-lined edges of the ordinary, until they encounter Totoro, a silent (but sensationally cute) spirit of the woods. As they befriend this friendly fuzzball, their quaint countryside quest turns into a hushed wonderland that has invited many viewers like me into a childhood imagination unburdened by urgency or expectation.

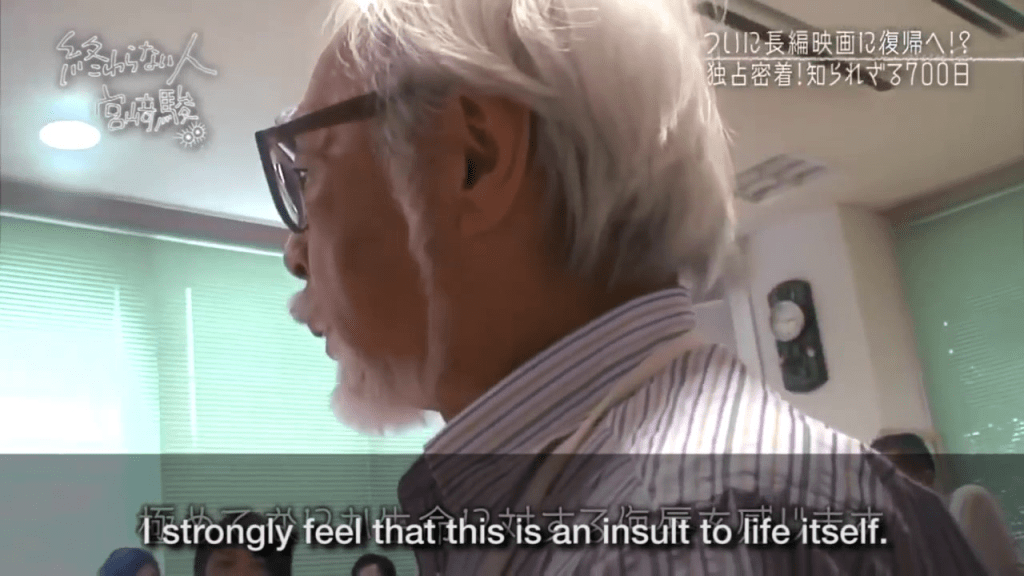

Between the release of Totoro (1988) and the end of the pre-ChatGPT era (2022), Miyazaki directed eight more films, each earning both domestic and global acclaim. Over the same period, even as digital tools became standard in media production, the prevailing view persisted that creativity was difficult to automate and remained a uniquely human trait (Why AI Can’t Take Away Creative Jobs – Forbes, 2024). In 2016, during an NHK documentary, Miyazaki was shown an AI demo designed to generate hard-to-animate movement. Though the demo was technically novel, Miyazaki dismissed it, with visible discomfort, as “an insult to life itself”.

For much of the pre-GenAI era, this almost spiritual view of creativity was widely shared. In 2013, Carl Benedikt Frey and Michael Osborne, an economist and an AI researcher at the University of Oxford, published “The Future of Employment”, a widely cited study that shaped policy and public debate on automation throughout the 2010s. They argued that creative intelligence constitutes an inherent “engineering bottleneck” and is therefore unlikely to be automated. Within this framework, which preceded the advent of LLMs and agentic AI, automation was expected to primarily affect routine and rule-based tasks, while domains requiring originality, social interaction and complex perception would remain uniquely human and comparatively resistant.

However, this distinction began to blur in the early 2020s with the emergence of GenAI. What first startled many was AI’s ability to produce coherent, context-aware text through LLMs. The pace since then has been difficult to track. Less than three years after ChatGPT’s launch, OpenAI’s 4o image generation tool was turning out Ghibli-inspired images and memes, prompting Sam Altman himself to tweet about the trend. What at first appeared as a shockwave of novelty did not remain confined to memes. It began to unsettle the foundations of the media industries that trade in imagination itself. In Hollywood, the Writers Guild of America went on strike in 2023, demanding protections against the use of generative AI to replace or diminish human writers or to undermine their compensation and authorship.

The breakthroughs observed (or threats perceived) in the creative industry were not isolated to art and media; they stemmed from a deeper shift in how AI systems learn and generate outputs. At the core of this progress is the development of foundation models, which improved dramatically through large-scale training on vast bodies of text, images and other data. The models were no longer executing explicitly programmed rules but instead applying learned statistical patterns to generate plausible outputs across a wide range of contexts. Models trained on large image and text datasets became able to generate stylistically coherent artwork and text. Meanwhile, in Silicon Valley and San Francisco, models trained on massive code repositories started producing functional code, suggesting implementations and assisting in debugging. Since the start of 2026, I have been seeing more and more software engineer friends here transition from individual contributors to managers of Claude agents. If you are curious, take a look at this article (Silicon Valley programmers are now barely programming).

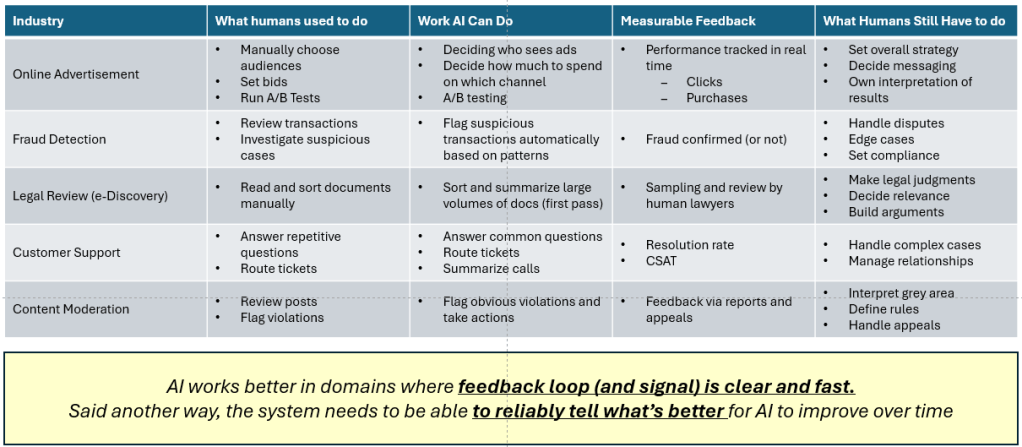

A similar pattern is emerging outside the industries that California dominates as well. Here are a few industries with rapid AI adoption in addition to the creative industry and software engineering:

One common feature across domains with productive AI adoption is the presence of a clear and fast feedback loop, which allows systems to generate and iterate on “good-enough” first drafts of work. However, the second-order effects become more nebulous as workflows scale and reliance on AI increases without proportional oversight and human insight.

In software engineering, for example, tools such as GitHub Copilot, Codex, and Claude promise meaningful productivity gains by accelerating code generation. Yet emerging evidence suggests that these gains may be overstated. In some cases, developers who report feeling faster are, in practice, slower once the additional time required to review, validate, and correct AI-generated code is counted (Developers Feel 20% Faster With AI. They’re Actually 19% Slower). This highlights a key tension: AI reduces the time required to produce an initial output, but it increases the effort required to ensure that output’s correctness and reliability. In effect, AI has shifted the bottleneck from production to judgment.

In the meantime, the rapid increase in speed has created strong incentives for key decision makers to commit early to automation-led productivity gains. While productivity improvements are easy to quantify by measuring output volume over a given time period, the cost of verification, the reduced depth of understanding and the systemic errors that compound in the background have been more difficult to observe and quantify. As speed has become synonymous with progress, the system has nudged us toward an abundance of mediocrity rather than progress.

In many high-stakes domains (think running a company, M&A, public policy, biology, etc.), “good” is rarely clean. It involves tradeoffs, externalities and asymmetric risks that don’t fully fit into a loss function. AI can generate options and even suggest better objectives, but it won’t choose for us which objective should be adopted, nor absorb the downside risk when it’s wrong. That moment, where options turn into commitments, requires human authorship. Even in cases where AI surpasses human intuition, such as chess, Go, or AlphaFold’s protein folding breakthrough, the system works because the objective “good” is tightly specified and can therefore be iterated on and optimized far beyond human capability. These breakthroughs did not arrive because AI decided what matters, but because each took place in a domain where we could specify what “good” means, and AI then executed at scale within that frame.

In any decision-making process, there comes a moment when options turn into commitments: capital is deployed, code is pushed into production, a treatment is administered, a contract is signed. It is in this moment that judgment bears its full weight. It requires not only the balancing of tradeoffs and the consideration of stakeholders, but also an acceptance of uncertainty itself and the burden of consequences, especially those that arrive unbidden and are unevenly borne. AI may surface possibilities and enumerate risks, but it cannot internalize them. AI’s responsibility will not scale with compute. In the end, value has to accrue to whoever underwrites such risk, not whoever produces ideas.

In the age of AI, progress will not be driven by the superiority of human intelligence, but by our authorship: our choices about which systems to employ and which definition of “good” is worth pursuing. This places increasing value on those who can articulate standards, make tradeoffs, and stand behind their decisions. We must invest in more people capable of defining and defending what “good” looks like when it is not given, but chosen.

Miyazaki’s refusal to adopt AI-generated art has never really been a denial of the technology’s capabilities. As a matter of fact, the images produced by AI are often striking, and even beautiful. Rather, his stance reflects something else: a commitment to authorship grounded in human experience. In this choice lies a distinction that cannot be optimized away.

As systems grow ever more capable, the question before us will be less about how something can be produced than about what ought to be produced, and under whose name. In the end, it is not the abundance of mere outputs that defines our progress, but the care with which we decide what is worth making and our willingness to bear the cost of having made it.
